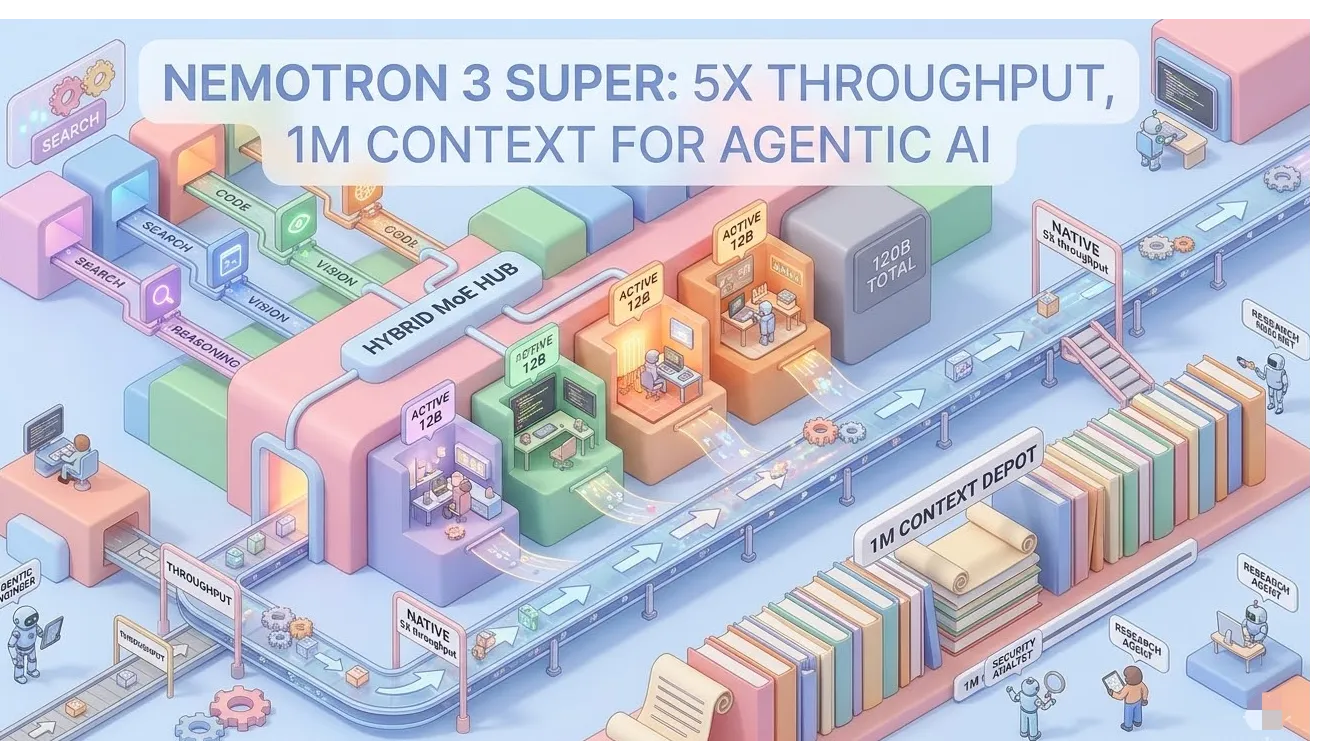

Nemotron 3 Super: NVIDIA's 120B Hybrid MoE Model Delivers 5X Throughput and 1M Context for Agentic AI

Key Takeaways

- Core Specs: 120B total parameters with 12B active via hybrid Mamba-Transformer Mixture-of-Experts (MoE) architecture and native 1-million-token context window.

- Performance Gains: Delivers up to 5x higher throughput than the prior Nemotron Super, ~450 tokens/second output speed, and up to 2.2x faster inference than GPT-OSS-120B while matching or exceeding accuracy on agentic tasks.

- Key Innovations: Latent MoE, which routes to 4x as many specialist experts at the compute cost of one; Multi-Token Prediction (MTP) layers, which enable native speculative decoding (up to 3x wall-clock speedup); and native NVFP4 pretraining for up to 4x faster inference on Blackwell GPUs while preserving 99.8% of BF16 accuracy.

- Benchmark Leadership: Leads open models on PinchBench (85.6%) and RULER 1M (91.75%), scores 60.47% on SWE-Bench Verified (behind Qwen3.5-122B at 66.40%, well ahead of GPT-OSS-120B at 41.9%), and powers the #1 entry on DeepResearch Bench; Artificial Analysis Intelligence Index score of 36.

- Accessibility: Fully open weights, datasets, and recipes on Hugging Face; deployable via NVIDIA NIM, runs quantized on 64GB hardware, and available across major clouds.

What Is NVIDIA Nemotron 3 Super?

NVIDIA released Nemotron 3 Super on March 11, 2026, as the flagship model in the Nemotron 3 family. Designed specifically for complex multi-agent systems, this 120B-total / 12B-active parameter open model targets real-world agentic workloads such as autonomous software engineering, cybersecurity orchestration, and long-form research.

Unlike dense models that activate every parameter for every token, Nemotron 3 Super uses sparse activation combined with hybrid efficiency layers. This directly tackles two major agentic pain points: the "thinking tax" (excessive compute for every subtask) and "context explosion" (histories ballooning 15x in multi-turn tool-calling workflows).

The result is a model that maintains frontier-level reasoning accuracy while running at production scale on fewer GPUs.

Architectural Deep Dive: Hybrid Mamba-Transformer MoE Explained

Benchmarks indicate the hybrid backbone delivers both linear-time long-context handling and precise associative recall. Here's how the components work together:

- Mamba-2 Layers: Provide 4x memory and compute efficiency with linear scaling, enabling the native 1M-token context window without quadratic memory blowup.

- Transformer Attention Anchors: Interleaved periodically for global long-range dependencies and high-accuracy tool calling.

- Mixture-of-Experts (MoE): 512 experts per layer with top-22 routing; only 12B parameters activate per token.

- Latent MoE Innovation: Projects tokens into a low-rank latent space before expert routing. This allows 4x more specialized experts (e.g., Python vs. SQL routing) at the compute cost of one, boosting accuracy without bandwidth penalties.

- Multi-Token Prediction (MTP) Layers: Predict multiple future tokens simultaneously with shared-weight heads. This improves chain-of-thought quality and enables native speculative decoding for up to 3x faster generation in structured tasks like code or API calls.

- Native NVFP4 Pretraining: The entire model was trained in NVFP4, NVIDIA's 4-bit floating-point format optimized for Blackwell. Post-training quantization to FP8 or NVFP4 preserves 99.8% of BF16 accuracy, and NVFP4 on Blackwell runs inference up to 4x faster than FP8 on Hopper.

These elements combine to deliver up to 5x the throughput of the previous Nemotron Super and 7.5x that of Qwen3.5-122B on equivalent hardware.
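To make the routing idea concrete, here is a minimal pure-Python sketch of a latent MoE forward pass for one token: project down to a small latent vector, score the experts from that latent, keep the top-k, mix their outputs, and project back up. The dimensions, random weights, and single-matrix "experts" are toy assumptions chosen for readability; this is not NVIDIA's implementation, and the real model uses 512 experts with top-22 routing.

```python
import math
import random

def matvec(matrix, vector):
    """Multiply a (rows x cols) matrix by a length-cols vector."""
    return [sum(w * v for w, v in zip(row, vector)) for row in matrix]

def random_matrix(rows, cols, rng):
    """Toy weight matrix with roughly unit-variance outputs."""
    scale = 1.0 / math.sqrt(cols)
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

def top_k_route(logits, k):
    """Pick the k highest-scoring experts; softmax their scores into weights."""
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in chosen)
    exps = [math.exp(logits[i] - peak) for i in chosen]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(chosen, exps)]

def latent_moe_forward(x, down, router, experts, up, k):
    """Route and run experts in the latent space, then project back."""
    latent = matvec(down, x)                      # d_model -> d_latent
    routes = top_k_route(matvec(router, latent), k)
    mixed = [0.0] * len(latent)
    for index, weight in routes:                  # only k experts execute
        expert_out = matvec(experts[index], latent)
        mixed = [m + weight * o for m, o in zip(mixed, expert_out)]
    return matvec(up, mixed), routes              # d_latent -> d_model

# Toy sizes (assumptions for illustration only).
D_MODEL, D_LATENT, N_EXPERTS, TOP_K = 16, 4, 8, 2
rng = random.Random(0)
down = random_matrix(D_LATENT, D_MODEL, rng)
router = random_matrix(N_EXPERTS, D_LATENT, rng)
experts = [random_matrix(D_LATENT, D_LATENT, rng) for _ in range(N_EXPERTS)]
up = random_matrix(D_MODEL, D_LATENT, rng)

token = [rng.gauss(0.0, 1.0) for _ in range(D_MODEL)]
output, routes = latent_moe_forward(token, down, router, experts, up, TOP_K)
print("experts used:", [index for index, _ in routes])
```

In this toy setup each expert is a 4x4 matrix rather than a 16x16 one, which illustrates why working in the latent space leaves room for more experts at a similar per-token cost.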

Benchmark Performance: Data-Driven Comparisons

Analysis of official and independent evaluations shows Nemotron 3 Super leads open models in the 100B+ class for agentic efficiency while remaining competitive on general reasoning.

Key Scores:

- PinchBench (OpenClaw agent brain): 85.6% – best open model.

- SWE-Bench Verified (OpenHands): 60.47% (vs. Qwen3.5-122B at 66.40%, GPT-OSS-120B at 41.9%).

- Terminal Bench Hard: 25.78%.

- RULER (1M context): 91.75% (vs. Qwen3.5-122B at 91.33%, GPT-OSS-120B at 22.30%).

- HMMT Feb 2025 (no tools): 93.67% (vs. Qwen3.5-122B at 91.40%).

- GPQA (no tools): 79.23%.

- Artificial Analysis Intelligence Index: 36 (well above open-model average of 15; ranks #2 among comparables).

Throughput Metrics (B200 GPUs, 8K input / 64K output):

- 449–478 output tokens/second – fastest in class.

- 2.2x higher than GPT-OSS-120B.

- 7.5x higher than Qwen3.5-122B.
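As a rough worked example, the ratios above can be turned into implied peer throughput and per-response generation time. These are derived figures, not measurements: the sketch assumes the ratios apply to the same per-stream output rate quoted for Nemotron 3 Super, that throughput is constant over the response, and that "64K" means 64,000 tokens.

```python
# Figures quoted in the list above (B200 GPUs, 8K input / 64K output).
SUPER_TOKENS_PER_SEC = (449, 478)
SPEEDUP_OVER = {"GPT-OSS-120B": 2.2, "Qwen3.5-122B": 7.5}
OUTPUT_TOKENS = 64_000  # assumption: "64K" read as 64,000 tokens

def implied_rate(super_rate, speedup):
    """Peer throughput implied by 'Super is `speedup`x higher'."""
    return super_rate / speedup

def generation_seconds(tokens, rate):
    """Time to emit `tokens` at a constant `rate` in tokens/second."""
    return tokens / rate

low, high = SUPER_TOKENS_PER_SEC
print(f"Nemotron 3 Super: {generation_seconds(OUTPUT_TOKENS, low):.0f} s "
      f"at {low} tok/s, {generation_seconds(OUTPUT_TOKENS, high):.0f} s at {high} tok/s")
for name, speedup in SPEEDUP_OVER.items():
    rate = implied_rate(low, speedup)  # derived from the low end of the range
    print(f"{name}: ~{rate:.0f} tok/s implied, "
          f"~{generation_seconds(OUTPUT_TOKENS, rate):.0f} s per response")
```

For an agent that emits long reasoning traces, this is the difference between waiting a few minutes and waiting well over a quarter of an hour per response, under the assumptions stated above.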

Community feedback and independent runs (Unsloth, Artificial Analysis) confirm the model remains highly accurate after quantization and excels in long-context agent workflows where dense models lose coherence.

Deployment Options and Advanced Optimization Tips

Nemotron 3 Super ships as a production-ready NVIDIA NIM microservice and is available immediately on:

- Hugging Face (BF16, FP8, NVFP4, and GGUF variants)

- build.nvidia.com

- Perplexity, OpenRouter, and partner platforms (DeepInfra, Fireworks, Together AI, etc.)

- Major clouds: Google Vertex AI and Oracle OCI, with AWS Bedrock and Azure coming soon

Local and On-Prem Deployment:

- Runs quantized on a single 64GB GPU or unified memory device using Unsloth GGUF or NVFP4.

- NVIDIA NIM provides drop-in OpenAI-compatible endpoints with optimized kernels for Latent MoE and MTP.
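Because the endpoint is OpenAI-compatible, a standard chat-completions request should work against it. The sketch below builds such a request with only the Python standard library. The base URL and model ID are placeholders, not documented values; substitute whatever your NIM deployment or hosting provider reports.

```python
import json
import urllib.request

# Placeholders (assumptions): replace with your deployment's values.
BASE_URL = "http://localhost:8000/v1"
MODEL_ID = "nemotron-3-super"

def build_chat_request(base_url, model, messages, max_tokens=512, api_key=None):
    """Build a POST request for an OpenAI-style /chat/completions endpoint."""
    payload = {"model": model, "messages": messages, "max_tokens": max_tokens}
    headers = {"Content-Type": "application/json"}
    if api_key:
        headers["Authorization"] = f"Bearer {api_key}"
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )

def send(request, timeout=60):
    """Send the request and return the first choice's message text."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]

request = build_chat_request(
    BASE_URL,
    MODEL_ID,
    [{"role": "user", "content": "List three risks of unbounded agent context."}],
)
# Call send(request) once a server is actually listening at BASE_URL.
```

Keeping request construction separate from sending makes it easy to point the same code at a local NIM container, build.nvidia.com, or another OpenAI-compatible provider by changing only the base URL, model ID, and key.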

Advanced Tips:

- Use SGLang for multi-agent tool-calling orchestration (leverages MTP for speculative decoding).

- Use vLLM for high-throughput batch inference.

- Use TensorRT-LLM for lowest-latency production serving with custom Latent MoE kernels.

- Fine-tune with NVIDIA NeMo (LoRA/SFT or GRPO RL) on domain-specific agent trajectories for further gains.
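Since several of these tips rely on speculative decoding, a small sketch of the verification step may help. It shows generic greedy speculative decoding, where cheap draft tokens (for example, from MTP heads) are checked against what the full model would have produced. It is not the SGLang, vLLM, or TensorRT-LLM implementation, and the token lists are made-up inputs.

```python
def verify_draft(draft_tokens, target_tokens):
    """Greedy verification of a speculative draft.

    target_tokens[i] is the full model's choice at position i, computed in
    one forward pass over the accepted prefix plus draft_tokens[:i]. It
    therefore has one more entry than draft_tokens.
    """
    if len(target_tokens) != len(draft_tokens) + 1:
        raise ValueError("expected one more target token than draft tokens")
    accepted = []
    for drafted, verified in zip(draft_tokens, target_tokens):
        if drafted != verified:
            # First disagreement: keep the full model's token and stop,
            # because later target tokens were conditioned on a wrong draft.
            accepted.append(verified)
            return accepted
        accepted.append(drafted)
    # Every draft token matched, so the extra target token is a free bonus.
    accepted.append(target_tokens[len(draft_tokens)])
    return accepted

# Structured output such as JSON tends to match the draft for long runs.
print(verify_draft(['{"', "name", '":'], ['{"', "name", '":', ' "']))
print(verify_draft(["foo", "bar"], ["foo", "baz", "qux"]))
```

Each verification pass yields between one and `len(draft_tokens) + 1` tokens for a single full-model forward pass, which is where the speedup on predictable outputs like code or API calls comes from.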

Edge Cases, Common Pitfalls, and How to Avoid Them

Long-Context Edge Cases: The 1M window shines for full codebase ingestion or multi-document research but can increase latency if prompts are not trimmed. Mitigation: Implement sliding-window summarization or agentic memory pruning before exceeding 128K active tokens.
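One way to apply this mitigation is to keep the system prompt and the most recent turns within a token budget, and fold everything older into a single summary message. The sketch below is a minimal version of that idea. The four-characters-per-token estimate, the `summarize` callback, and the reserve size are illustrative assumptions; in practice you would use the model's tokenizer and a real summarizer.

```python
ACTIVE_TOKEN_BUDGET = 128_000  # threshold suggested in the paragraph above

def approx_tokens(text):
    """Very rough stand-in for a real tokenizer: about 4 characters per token."""
    return max(1, len(text) // 4)

def prune_history(messages, budget, summarize, count=approx_tokens, summary_reserve=0):
    """Keep the system message and newest turns; summarize whatever is dropped.

    Assumes messages[0] is the system message. `summary_reserve` holds back
    part of the budget for the summary text itself.
    """
    system, turns = messages[0], messages[1:]
    used = count(system["content"]) + summary_reserve
    kept = []
    for message in reversed(turns):          # walk from newest to oldest
        cost = count(message["content"])
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()                           # restore chronological order
    dropped = turns[: len(turns) - len(kept)]
    if not dropped:
        return list(messages)
    summary = {"role": "system", "content": summarize(dropped)}
    return [system, summary] + kept

history = [
    {"role": "system", "content": "You are a coding agent."},
    {"role": "user", "content": "x" * 400},
    {"role": "assistant", "content": "y" * 400},
    {"role": "user", "content": "z" * 400},
]
pruned = prune_history(
    history,
    budget=250,  # tiny budget so the example actually prunes
    summarize=lambda old: f"[{len(old)} earlier message(s) summarized]",
)
print([m["content"][:40] for m in pruned])
```

Running the pruning step before each model call, rather than after a failure, keeps latency predictable as the conversation grows.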

Verbosity Pitfall: The model generates significantly more output tokens than its peers (110M tokens across the Artificial Analysis evaluation). Solution: Add explicit "concise mode" instructions to the system prompt, or post-process responses with a smaller summarizer model.

Hardware Pitfall: NVFP4 delivers maximum speed only on Blackwell GPUs. On Hopper or consumer cards, default to FP8 quantization, which maintains accuracy while still improving throughput over BF16 baselines.
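The precision choice also determines how much memory the weights occupy, which is the arithmetic behind the 64GB figure quoted earlier. The sketch below is a weights-only estimate: it ignores quantization scale factors, KV and state caches, activations, and runtime overhead, so real requirements will be higher.

```python
TOTAL_PARAMS = 120e9  # 120B total parameters (all experts must be resident)
BITS_PER_WEIGHT = {"BF16": 16, "FP8": 8, "NVFP4": 4}

def weight_gigabytes(params, bits_per_weight):
    """Weights-only footprint in GB (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9

for fmt, bits in BITS_PER_WEIGHT.items():
    print(f"{fmt}: {weight_gigabytes(TOTAL_PARAMS, bits):.0f} GB")
# BF16: 240 GB
# FP8: 120 GB
# NVFP4: 60 GB
```

Only 12B parameters are active per token, but every expert still has to be stored, so the footprint follows the 120B total. At 4 bits the weights come to roughly 60 GB, which is why a 64GB device is the practical floor and leaves little headroom for long contexts.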

Multi-Agent Scaling: In systems with 10+ collaborating agents, context explosion remains a risk. Best practice: Route simple subtasks to lighter models (e.g., Nemotron 3 Nano) and reserve Super for high-stakes reasoning and long-memory coordination.
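A routing policy like this can start as a simple rule before graduating to a learned classifier. The sketch below is one hypothetical version: the model labels, the `Subtask` fields, and the 32K-token threshold are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass

# Placeholder labels (assumptions); map these to your real deployment names.
SUPER = "nemotron-3-super"
NANO = "nemotron-3-nano"

@dataclass
class Subtask:
    description: str
    context_tokens: int
    needs_multistep_reasoning: bool

def choose_model(task, long_context_threshold=32_000):
    """Send hard or long-context subtasks to Super, everything else to Nano."""
    if task.needs_multistep_reasoning:
        return SUPER
    if task.context_tokens > long_context_threshold:
        return SUPER
    return NANO

tasks = [
    Subtask("Extract the ticket ID from this email", 600, False),
    Subtask("Plan a refactor across the whole repository", 400_000, True),
    Subtask("Answer a question over a long incident log", 90_000, False),
]
for task in tasks:
    print(f"{choose_model(task):>17}  <- {task.description}")
```

Logging which branch fired for each subtask makes it straightforward to tune the threshold later against observed cost and accuracy.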

Fine-Tuning Caution: Re-run quantization calibration after LoRA training, since fine-tuned weights can drift from the ranges the original calibration assumed. The provided NeMo recipes include post-training quantization steps for this purpose.

Conclusion

NVIDIA Nemotron 3 Super represents a major leap in open agentic AI infrastructure. Its hybrid architecture, combined with MTP and Latent MoE innovations, solves the efficiency and coherence challenges that have limited multi-agent adoption at scale. Organizations building autonomous coding agents, cybersecurity triaging systems, or deep-research workflows now have a production-grade open model that delivers frontier reasoning at dramatically lower cost and latency.

Access the model today on build.nvidia.com or download quantized weights from Hugging Face to start prototyping your next agentic system and measure the throughput gains (up to 5x over the prior Nemotron Super) on your own workloads.